Livermore Computing is making significant progress toward siting the NNSA’s first exascale supercomputer.

Innovative hardware provides near-node local storage alongside large-capacity storage.

Siting a supercomputer requires close coordination of hardware, software, applications, and Livermore Computing facilities.

Flux, next-generation resource and job management software, steps up to support emerging use cases.

A Laboratory-developed software package management tool, enhanced by contributions from more than 1,000 users, supports the high performance computing community.

LLNL researchers ran HiOp, an open-source optimization solver, on 9,000 nodes of Oak Ridge National Laboratory’s Frontier exascale supercomputer in the largest simulation of its kind to date.

Explainable artificial intelligence techniques can extend the reach of machine learning applications in materials science, making the design of new materials much more efficient.

The Lab’s workhorse visualization tool provides expanded color map features, including options for visually impaired users.

Learn how to use LLNL software in the cloud. Throughout August, join our tutorials on how to install and use several projects on AWS EC2 instances. No previous experience necessary.

2023’s Developer Day was a two-day event for the first time, balancing an all-virtual technical program with a fully in-person networking day.

A research team from Oak Ridge and Lawrence Livermore national labs won the first IPDPS Best Open-Source Contribution Award for the paper “UnifyFS: A User-level Shared File System for Unified Access to Distributed Local Storage.”

The report lays out a comprehensive vision for the DOE Office of Science and NNSA to expand their work in scientific use of AI by building on existing strengths in world-leading high performance computing systems and data infrastructure.

LLNL CTO Bronis de Supinski talks about how the Lab deploys novel-architecture AI machines and provides an update on El Capitan.

Splitting memory resources in high performance computing between local nodes and a larger shared remote pool can better support diverse applications.

Lori Diachin will take over as director of the DOE’s Exascale Computing Project on June 1, guiding the successful, multi-institutional high performance computing effort through its final stages.

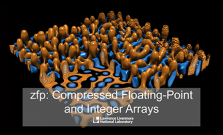

Unique among data compressors, zfp is designed to be a compact number format for storing data arrays in-memory in compressed form while still supporting high-speed random access.

Livermore CTO Bronis de Supinski joins the Let's Talk Exascale podcast to discuss the details of LLNL's upcoming exascale supercomputer.

Variorum provides robust, portable interfaces that allow us to measure and optimize computation at the physical level: temperature, cycles, energy, and power. With that foundation, we can get the best possible use of our world-class computing resources.

The addition of the spatial data flow accelerator to LLNL’s Livermore Computing Center is part of an effort to upgrade the Lab’s cognitive simulation (CogSim) program.

The Compiler-induced Inconsistency Expression Locator tool is recognized at ISC23.

The Lab was already using Elastic components to gather data from its HPC clusters and then investigated whether Elasticsearch and Kibana could be applied to all scanning and logging activities across the board.

Computer scientist Vanessa Sochat talks to BSSw about a recent effort to survey software developer needs at LLNL.

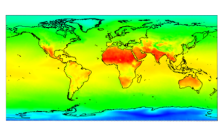

Supercomputers have broken the exascale barrier, marking a new era in processing power, but the energy consumption of such machines cannot be allowed to run unchecked.

Open-source software played a key role in paving the way for LLNL's ignition breakthrough and will continue to help push the field forward.