Computer scientist Vanessa Sochat talks to BSSw about a recent effort to survey software developer needs at LLNL.

Supercomputers broke the exascale barrier, marking a new era in processing power, but the energy consumption of such machines cannot run rampant.

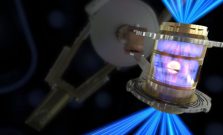

Open-source software has played a key role in paving the way for LLNL's ignition breakthrough and will continue to help push the field forward.

While a thorough history of women in early computing at Livermore has yet to be written, their names and achievements are threaded through the archives. Here are some contributions of women who developed code during the Lab's early decades.

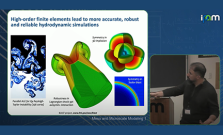

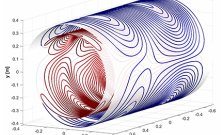

UCLA's Institute for Pure & Applied Mathematics hosted LLNL's Tzanio Kolev for a talk about high-order finite element methods.

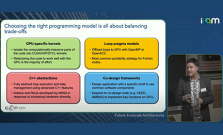

UCLA's Institute for Pure & Applied Mathematics hosted LLNL's Erik Draeger for a talk about the challenges and possibilities of exascale computing.

“I am delighted to be recognized by HPCwire,” Quinn said. “I feel the recognition has as much to do with the stature of Livermore Computing as the opportunity I’ve had to contribute.”

A glimpse into the career and contributions of a computing pioneer, Robert Hughes, and his role in developing FORTRAN.

This year, the DOE honored 44 teams, including LLNL's Exascale Computing Facility Modernization Project team for significant power and cooling upgrades to support upcoming exascale supercomputers.

LLNL's popular lecture series, “Science on Saturday,” runs February 4–25. The February 18 lecture is titled “Supersizing Computing: 70 Years of HPC.”

Computer scientist Johannes Doerfert was recognized as a 2023 BSSw fellow. He plans to use the funding to create videos about best practices for interacting with compilers.

Collaborative autonomy software apps allow networked devices to detect, gather, identify and interpret data; defend against cyber-attacks; and continue to operate despite infiltration.

Ending with one of the most significant achievements in scientific history, 2022 will be remembered as an important year for Lawrence Livermore National Laboratory (LLNL).

ACM named Bronis R. de Supinski, LLNL’s CTO for Livermore Computing, a 2022 ACM Fellow, recognizing his contributions to the design of large-scale systems and their programming systems and software.

A multidecade, multi-laboratory collaboration evolves scalable long-term data storage and retrieval solutions to survive the march of time.

High performance computing was key to the December 5 breakthrough at the National Ignition Facility.

Two supercomputers powered the research of hundreds of scientists at the National Ignition Facility at NNSA's Lawrence Livermore National Laboratory, which recently achieved ignition.

The major scientific breakthrough, decades in the making, will pave the way for advancements in national defense and the future of clean power.

ASC’s Advanced Memory Technology research projects are developing technologies that will impact future computer system architectures for complex modeling and simulation workloads.

Combining specialized software tools with heterogeneous HPC hardware requires an intelligent workflow performance optimization strategy.

The 2022 International Conference for High Performance Computing, Networking, Storage, and Analysis (SC22) returned to Dallas, where a large contingent of LLNL staff participated in sessions, panels, paper presentations, and workshops centered on HPC.

In this issue: MFEM community workshops, compiler co-design, HPC standards committees, and AI/ML for national security

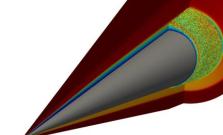

The award recognizes progress in the team's ML-based approach to modeling ICF experiments, which has led to the creation of faster and more accurate models of ICF implosions.

The second annual MFEM workshop brought together the project’s global user and developer community for technical talks, Q&A, and more.