The prestigious award is handed out every two years and recognizes outstanding contributions to the development and use of mathematical and computational tools and methods for the solution of science and engineering problems.

The latest issue of Science & Technology Review highlights the R&D 100 award–winning Flux software framework.

Science & Technology Review highlights the Exascale Computing Facility Modernization project that delivered the infrastructure required to bring exascale computing online in 2023.

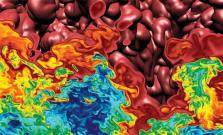

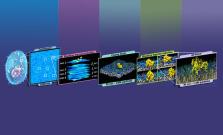

Two LLNL-led teams received SciVis Test of Time awards at the 2022 IEEE VIS conference for papers that have achieved lasting relevance in the field of scientific visualization.

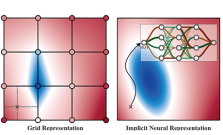

Researchers are starting a three-year project aimed at improving methods for visual analysis of large heterogeneous data sets as part of a recent DOE funding opportunity.

While LLNL awaits the arrival of El Capitan, physicists and computer scientists running scientific applications on testbeds are getting a taste of what to expect.

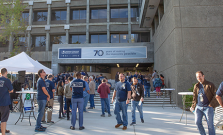

Employees gathered for the Lab’s first-ever Employee Engagement Day, held Oct. 11. The event featured food, drink, informative displays, historical films and more.

Climate change can bring not only heat, but also increased humidity, reducing the efficiency of the evaporative coolers many HPC centers rely on.

Researchers will address the challenge of efficiently differentiating large-scale applications for the DOE by building on advances in LLNL’s MFEM finite element library and MIT’s Enzyme AD tool.

The Earth System Grid Federation, a multi-agency initiative that gathers and distributes data for top-tier projections of the Earth’s climate, is preparing a series of upgrades to make using the data easier and faster while improving how the information is curated.

Preparing the Livermore Computing Center for El Capitan and the exascale era of supercomputers required an entirely new way of thinking about the facility’s mechanical and electrical capabilities.

The second article in a series about the Lab's stockpile stewardship mission highlights computational models, parallel architectures, and data science techniques.

The first article in a series about the Lab's stockpile stewardship mission highlights the roles of computer simulations and exascale computing.

The new oneAPI Center of Excellence will involve the Center for Applied Scientific Computing and accelerate ZFP compression software to advance exascale computing.

The Advanced Technology Development and Mitigation program within the Exascale Computing Project shows that the best way to support the mission is through open collaboration and a sustainable software infrastructure.

LLNL has signed a memorandum of understanding with HPC facilities in Germany, the United Kingdom, and the U.S., jointly forming the International Association of Supercomputing Centers.

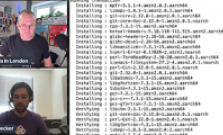

LLNL's Greg Becker spoke with HPC Tech Shorts to explain how Spack's binary cache works. The video “Get your HPC codes installed and running in minutes using Spack’s Binary Cache” runs 15:11.

Learn how to use LLNL software in the cloud. In August, we will host tutorials in collaboration with AWS on how to install and use these projects on AWS EC2 instances. No previous experience necessary.

Computer scientist Kathryn Mohror is among LLNL's recipients of the Department of Energy’s Early Career Research Program awards.

An LLNL team will be among the first researchers to perform work on the world’s first exascale supercomputer—Oak Ridge National Laboratory’s Frontier—when they use the system to model cancer-causing protein mutations.

Livermore’s machine learning experts aim to provide assurances on performance and enable trust in machine-learning technology through innovative validation and verification techniques.

In a presentation delivered to the 79th HPC User Forum at Oak Ridge National Laboratory, LLNL's Terri Quinn revealed that AMD’s forthcoming MI300 APU would be the computational bedrock of El Capitan, which is slated for installation at LLNL in late 2023.

This year marks the 30th anniversary of the High Performance Storage System (HPSS) collaboration, comprising five DOE HPC national laboratories: LLNL, Lawrence Berkeley, Los Alamos, Oak Ridge, and Sandia, along with industry partner IBM.

After 30 years, the High Performance Storage System (HPSS) collaboration continues to lead and adapt to the needs of the time while honoring its primary mission: long-term stewardship of the most valuable data held by government, academic, and commercial organizations around the world.

An update on early and mid-career recognition award recipients, including Livermore Computing's own Todd Gamblin.