In this issue: contact mechanics, HPC optimization, quantum control, and reduced-order models

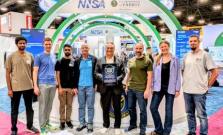

At SC25, LLNL earned multiple top honors across exascale computing, open-source software and real-world scientific applications, receiving four 2025 HPCwire awards and a Hyperion Research HPC Innovation Excellence Award.

LLNL’s presence, which spanned dozens of sessions including tutorials, workshops, paper presentations and birds-of-a-feather meetings, was felt across virtually every major event of the week.

Widely viewed as the highest recognition in HPC, the Gordon Bell Prize recognizes innovations that push the limits of computational performance, scalability and scientific impact on pressing real-world problems.

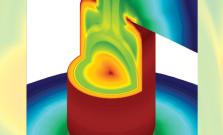

Researchers used the exascale supercomputer El Capitan to perform the largest fluid dynamics simulation ever—surpassing one quadrillion degrees of freedom in a single computational fluid dynamics problem.

El Capitan once again claimed the top spot on the Top500 List of the world’s most powerful supercomputers, announced at the 2025 International Conference for High Performance Computing, Networking, Storage and Analysis (SC25) in St. Louis.

Scientists at LLNL and collaborators at AMD and Columbia University have achieved a milestone in biological computing: completing the largest and fastest protein structure prediction workflow ever run, using the full power of El Capitan.

The latest issue of LLNL's magazine marks the 20th anniversary of the Computing Grand Challenge.

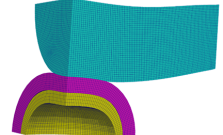

With the arrival of the exascale supercomputer El Capitan, Lawrence Livermore National Laboratory researchers are entering a new era of scientific simulation — one in which they can model extreme physical events with unprecedented resolution, realism and speed.

In a paper published in Science, Lawrence Livermore National Laboratory researchers detail how they used physics-informed deep learning and a cognitive simulation framework to forecast the success of the historic Dec. 5, 2022, fusion ignition shot, predicting a greater than 70% probability that it would exceed energy breakeven, that is, produce more energy from the fusion reaction than the laser energy used to drive it.

LLNL is home to the world’s most complete set of inertial confinement fusion (ICF) modeling and simulation tools, capturing the intricacies of laser light interaction, electron and x-ray transport, nonequilibrium atomic physics, magnetohydrodynamics, and fusion burn.

Scientists at LLNL have helped develop an advanced, real-time tsunami forecasting system—powered by El Capitan, the world’s fastest supercomputer—that could dramatically improve early warning capabilities for coastal communities near earthquake zones.

A unique laser optic design and two novel open-source software projects bring the Laboratory’s R&D 100 awards total to 182.

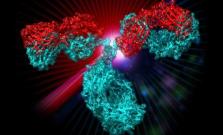

As described in a recent paper published in Science, a new cancer drug candidate developed by Lawrence Livermore National Laboratory, BBOT (BridgeBio Oncology Therapeutics) and the Frederick National Laboratory for Cancer Research has demonstrated the ability to block tumor growth without triggering a common and debilitating side effect.

Nicknamed SUM25, Spack’s first user meeting showcased new developments and a thriving community.

LLNL's flagship exascale machine maintained its status as the fastest supercomputer on the planet, claiming the No. 1 spot on not just one but three of the most prestigious HPC rankings.

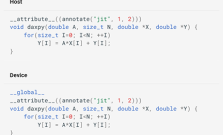

Research recognized at the IEEE HiPC conference proposes an optimized version of OpenMP for vendor-agnostic GPU performance, portability and scalability.

LLNL participated in the ISC High Performance Conference (ISC25), held June 10–13.

A new just-in-time compilation approach leverages LLVM intermediate representation (IR) to optimize GPU kernels while remaining portable across GPU architectures.

LLNL scientists use AI to optimize antibodies against mutations and accelerate pandemic preparedness

Researchers from LLNL, in collaboration with other leading institutions, have used an AI-driven platform to preemptively optimize an antibody so that it neutralizes a broad range of SARS-CoV-2 variants.

HPCwire's People to Watch are at the forefront of HPC trends, adapting new technology to a rapidly changing world to unlock answers to the biggest societal challenges of our time and make the impossible possible.

Over the next three years, researchers from LLNL's Center for Applied Scientific Computing (CASC) and their collaborators will integrate large language models (LLMs) into HPC software to boost performance and sustainability.

Researchers from LLNL, Arizona State University and Michigan State University will investigate the compositions of 70 exoplanets through the Computing Grand Challenge Program, which allocates substantial institutional computing resources to scientists pursuing cutting-edge research.

The latest issue of LLNL's magazine explains how the world’s most powerful supercomputer helps scientists safeguard the U.S. nuclear stockpile.

LLNL's Bruce Hendrickson joins other HPC luminaries in this op-ed about the future of the field.