Three testbed machines for Lawrence Livermore National Laboratory’s future exascale El Capitan supercomputer — nicknamed RZVernal, Tioga and Tenaya — all ranked among the top 200 on the latest Top500 List of the world’s most powerful computers.

LLNL and Amazon Web Services (AWS) have signed a memorandum of understanding to define the role of leadership-class HPC in a future where cloud HPC is ubiquitous.

LLNL participates in the International Parallel and Distributed Processing Symposium (IPDPS) on May 30 through June 3.

A research team has won the best paper award at PacificVis 2022 for a resolution-precision-adaptive representation technique that reduces mesh sizes, thereby reducing the memory and storage footprints of large scientific datasets.

Join LLNL at the ISC High Performance Conference on May 29 through June 2. The event brings together the HPC community to share the latest technology of interest to HPC developers and users.

Lawrence Livermore National Laboratory (LLNL) and the United Kingdom’s Hartree Centre are launching a new webinar series intended to spur collaboration with industry through

The U.S. Department of Energy’s (DOE) National Nuclear Security Administration (NNSA) today announced the award of an $18 million contract to Cornelis Networks for collaborative research and development in next-generation networking for supercomputing systems at the NNSA laboratories.

Using machine learning models to analyze one of the largest databases of patients with cancer and COVID-19, researchers from LLNL and UC–San Francisco found previously unreported links between a rare type of cancer.

The Livermore Computing–developed Flux project addresses challenges posed by complex scientific research supercomputing workflows, and the team has played a major role in the ECP ExaWorks project.

An LLNL team has developed a comprehensive dynamic model of COVID-19 disease progression in hospitalized patients.

The Oppenheimer Science and Energy Leadership Program has selected materials scientist T. Yong Han and computer scientist Kathryn Mohror as 2022 fellows.

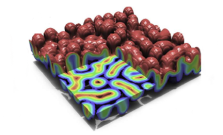

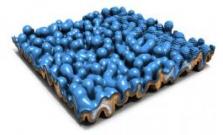

In the Multiscale Machine-Learned Modeling Infrastructure (MuMMI), the macroscale simulation runs a large system, with hundreds of proteins, at low resolution, and machine learning decides which regions of the macro-model require investigation in a microscale simulation at much higher resolution.

Lawrence Livermore National Laboratory’s AI Innovation Incubator (AI3) will serve as the foundation for a cohesive view of AI for Applied Science, built upon LLNL’s “cognitive simulation” approach that combines state-of-the-art AI technologies with leading-edge high performance computing.

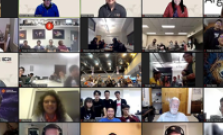

LLNL’s formidable presence at the annual Supercomputing Conference (SC21) included leadership of the Student Cluster Competition (SCC), which was held in a hybrid format. Computer scientist Kathleen Shoga served as this year’s SCC chair.

Under a newly funded HPC for Manufacturing project, LLNL will partner with steel and mining company ArcelorMittal to couple computer vision and machine learning methods with HPC resources to reduce emissions and defects from inclusions in steel manufacturing.

For the first time ever, the 2021 International Conference for High Performance Computing, Networking, Storage and Analysis (SC21) went hybrid, with dozens of both in-person and virtual workshops, technical paper presentations, panels, tutorials and “birds of a feather” sessions.

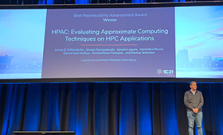

A suite developed by an LLNL team to simplify evaluation of approximation techniques for scientific applications has won the first-ever Best Reproducibility Advancement Award at SC21 for its approximation framework.

The DOE's Exascale Computing Project compiled a video playlist for Exascale Day on October 18 (10^18).

The winners of the 2021 R&D 100 Awards, a renowned worldwide competition, have been announced, among them LLNL's Flux workload management software framework in the Software/Services category.

LLNL will lend its expertise in vaccine research—most recently from designing new antibodies and antiviral drugs for COVID-19—and computing resources to the Human Vaccines Project consortium to aid development of a universal coronavirus vaccine and improve understanding of immune response.

To prepare for El Capitan and the next generation of supercomputers, construction crews and maintenance workers at LLNL have been hard at work since late 2019 and throughout the pandemic on a $100 million Exascale Computing Facility Modernization project.

The second Commodity Technology Systems contract will provide at least $40 million for more than 40 petaflops (40 quadrillion floating-point operations per second) of expanded computing capacity for the NNSA Tri-Labs (LLNL, LANL and SNL).

Using the zfp open source software, researchers can vary the compression ratio to any desired setting, from 10:1 (shown on the left) to 250:1 (on the right), where compression errors become apparent.

LLNL computer scientist Ignacio Laguna and team will examine one of the major challenges as supercomputers become increasingly heterogeneous — the numerical aspects of porting scientific applications to different HPC platforms.

Lawrence Livermore National Laboratory and The Data Mine learning community at Purdue University are partnering to speed up drug design using computational tools under the Accelerating Therapeutic Opportunities in Medicine (ATOM) project.