LLNL computer scientist Ignacio Laguna and team will examine one of the major challenges as supercomputers become increasingly heterogeneous — the numerical aspects of porting scientific applications to different HPC platforms.

Lawrence Livermore National Laboratory and The Data Mine learning community at Purdue University are partnering to speed up drug design using computational tools under the Accelerating Therapeutic Opportunities in Medicine (ATOM) project.

While our world-class supercomputers garner a lot of attention (we have more computers on the TOP500 list as of June 2021 than any other institution!), our use of these amazing machines is enabled by the codes developed to model and simulate complex physical phenomena on massively parallel archi

A paper in Proceedings of the Royal Society A highlights findings by a Lawrence Livermore National Laboratory team on how nuclear weapon blasts close to the Earth’s surface create complications in their effects and apparent yields. The work is featured on the front cover of the pub

In this episode, guest Nisha Mulakken sits down with TDS to discuss COVID-19 detection and analysis.

Flux’s fully hierarchical resource management and graph-based scheduling features improve the performance, portability, flexibility, and manageability of both traditional and complex scientific workflows on many types of computing systems—in the cloud, at remote locations, on a laptop, or on next

Lawrence Livermore will participate in the ISC High Performance Conference (ISC21) on June 24 through July 2. The online event brings together the HPC community—from research centers, commercial companies, academia, national laboratories, government agencies, exhibitors, and more—to share the latest technology of interest to HPC developers and users.

LLNL, IBM and Red Hat are combining forces to develop best practices for interfacing HPC schedulers and cloud orchestrators, an effort designed to prepare for emerging supercomputers that take advantage of cloud technologies.

The DOE’s Exascale Computing Project (ECP) is accelerating delivery of a capable exascale computing ecosystem to provide breakthrough modeling and simulation solutions to address critical challenges in scientific discovery, energy assurance, economic competitiveness, and national security.

The high performance computing industry publication HPCwire named Bronis R. de Supinski, Lawrence Livermore National Laboratory’s chief technology officer for Livermore Computing, as one of its People to Watch for 2021.

COVID-19 HPC Consortium scientists and stakeholders met virtually on March 23 to mark the consortium’s one-year anniversary.

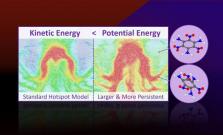

Molecular dynamics simulations predict that more potential energy is localized in hotspots than their kinetic energy (or temperature) would suggest.

A near-node local storage innovation called Rabbit factored heavily into Lawrence Livermore National Laboratory’s decision to select Cray’s proposal for its CORAL-2 machine, the lab’s first exascale-class supercomputer, El Capitan.

IEEE, the world's largest technical professional organization, announced it has elevated Bronis de Supinski to the rank of fellow, recognizing LLNL's Livermore Computing chief technology officer (CTO) for his leadership in the design and use of large-scale computing systems.

To get a sense of the size and scope of the storage systems in use at Lawrence Livermore, we recently had a long conversation with Robin Goldstone, HPC strategist in the lab’s Advanced Technologies Office.

Sierra was one of six Lawrence Livermore National Laboratory supercomputers to make the latest TOP500 List of the most powerful supercomputers in the world. Sierra held on to the No. 3 spot, achieving 94.6 petaflops on the High Performance LINPACK (HPL) benchmark.

Lawrence Livermore National Laboratory’s newest supercomputer, Ruby, a 6-petaflop Intel Xeon Platinum-based cluster, will be used for unclassified programmatic work in support of the National Nuclear Security Administration’s stockpile stewardship mission, open science and the search for therapeu

Hear what’s coming in OpenMP 5.1 and beyond, how the C++ ecosystem is evolving, and why Python has a place in HPC. And have fun as these two razz each other.

Spack is now the deployment mechanism for Fugaku, the world’s top supercomputer and an Arm-based system, and it has handled 1,300 packages on the Summit machine. Spack is expected to gain further momentum with future exascale systems as well.

Over the last year in particular, Lawrence Livermore National Lab has been at the forefront of newer architectures across multiple systems: Mammoth, an expanded Corona, Lassen with a Cerebras chip, and Ruby, a top 100-class all-CPU (Intel Xeon Platinum) supercomputer.

The scientific computing and networking leadership of 17 Department of Energy (DOE) national laboratories will be showcased at SC20, the International Conference for High-Performance Computing, Networking, Storage and Analysis, taking place Nov.

Funded by the Coronavirus Aid, Relief and Economic Security (CARES) Act, Lawrence Livermore's new 'big memory' high performance computing cluster, Mammoth, will be used to perform genomics analysis, nontraditional simulations and graph analytics required by scientists working on COVID-19, including the devel

The San Joaquin Expanding Your Horizons Conference went virtual for the first time in its 28-year history. Pictured: LC’s John Gyllenhaal presents “The Magic of STEM” workshop.

LLNL has installed a new artificial intelligence accelerator from SambaNova Systems into the Corona supercomputing cluster, allowing Lab researchers to run scientific simulations for inertial confinement fusion, COVID-19 and other basic science, while offloading AI calculations from those simulat